Machine learning-driven framework for realtime air quality assessment and predictive environmental health risk mapping

Study area and data collection

In this investigation, air quality conditions were simulated using a comprehensive multi-zonal method in order to capture the dynamic, multi-faceted character of air pollutants across a wide range of socioeconomic and ecological settings. The method identified and assessed key geographic areas with distinct patterns of pollution, human habitation, and land use. The study covered urban, industrial, suburban, rural, and transportation corridor areas, allowing a wide range of air quality conditions to be monitored and predicted.

The study used five geographic categories to describe pollution sources and their interactions with human habitats. The first, the Urban Core, comprises metropolitan central commercial hubs. Dense population, heavy traffic, ongoing construction, and active economic development give these areas elevated levels of airborne pollution. The second zone, the Industrial Area, is characterised by mining, power generation, and heavy industry. These hotbeds of industrial emissions and chemical pollutants contrast with the residential and ecological zones included in the model. The third zone, the Suburban Area, represents transitional settlements on the periphery of cities. These areas contain densely populated residential neighbourhoods, occasional light industry, and relatively light traffic. Suburban landscapes help explain how pollution disperses from cities to their surroundings.

The fourth zone, the Rural Area, comprises agricultural or forested areas with low population densities. Baseline pollution levels from these less developed areas are useful for comparison. Where contamination occurs, agricultural fires, unregulated traffic, or pollutants transported from nearby areas are the likely causes. The fifth zone, the Traffic Corridor, covers main thoroughfares, urban bypass roads, and congested inner-city arterial routes, where persistent vehicle emissions raise localised pollutant concentrations, particularly during rush hour. Comparing these five zones shows how air pollution and other environmental factors vary between urban and rural places. This zoning approach underpins the adaptable system for health risk mapping and real-time air quality forecasting developed in this work.

Air quality indicators and sensor network

In this study, air pollution and its health effects were modelled and predicted using a strategically located sensor network and a wide range of air quality indicators. To ensure broad applicability and regulatory compliance, the pollutants were selected based on national ambient air quality standards and World Health Organisation guidelines. The targets were contaminants that harm humans and ecosystems as a result of urbanisation, industrialisation, and vehicle emissions. Particulate matter with diameters of up to 2.5 µm and 10 µm (PM2.5 and PM10, respectively) requires continuous monitoring. Given their potential to enter the bloodstream and trigger serious respiratory and cardiovascular illnesses, these particles are of the utmost importance. The combustion of fossil fuels releases nitrogen dioxide (NO2), a toxic gas that irritates the airways and reduces lung function. Industrial processes and the combustion of sulphur-containing fuels produce sulphur dioxide (SO2), a gas that can irritate the eyes and cause respiratory problems, particularly in the young and the old. We also examined carbon monoxide (CO), a gas that is both odourless and colourless. Exposure to this gas can impede blood oxygenation and pose acute risks. Finally, ozone (O3) is beneficial in the upper atmosphere but harmful at ground level, where it forms through photochemical reactions in sunlight and causes symptoms including coughing, chest pain, and airway irritation.

Together, these six contaminants give a comprehensive picture of air quality across zones, covering both immediate health risks and long-term environmental pressures. Because of their prevalence in urban areas and industrial corridors, they are well suited to real-time predictive modelling of health risks. These pollutants were tracked and analysed in real time using a variety of data sources. In each study zone, fixed ground-based stations used high-accuracy instrumentation from academic and government institutes. Additional sensing systems were calibrated and validated against these reference monitors. Mobile sensing devices were also widely used to increase coverage in some areas. Public transportation vehicles, garbage trucks, and unmanned aerial vehicles (UAVs) equipped with Internet of Things (IoT) air quality monitors recorded the daily patterns of pollutant dispersion.

Satellite data on aerosol optical depth, surface temperature, humidity, and wind speed provided critical context. This information was supplied by NASA’s MODIS instruments and ESA’s Sentinel satellites. These features allow the models to account for atmospheric variables that affect the concentration and transport of pollutants. Additional data came from volunteers through AirVisual and PurpleAir. By facilitating the installation of air quality monitors in private residences and public areas, these platforms enable volunteers to augment official databases with hyper-local data. While crowdsourced data is not as precise as data from regulatory-grade devices, it promotes community participation and supports spatial interpolation over areas with few sensors. All sources were geotagged and time-stamped by GPS in order to bring the data into spatial alignment. With only 15 min of data, we were able to create risk maps and provide real-time predictions. The integrated sensor network delivered actionable environmental intelligence and supported a scalable and resilient machine learning framework.

Prior to modelling, a rigorous preprocessing stage verified the dataset’s validity and stability. Data from a variety of sources, including fixed ground stations, mobile sensors, satellite feeds, and crowdsourced platforms, had to be cleaned, standardised, and enriched for spatial and temporal consistency. To enhance the predictive capability of the machine learning models, this phase removed noise, filled data gaps, and identified important attributes.

The first step of preprocessing was to identify and remove outliers, because they could distort model predictions and reduce accuracy. Two approaches were used: the interquartile range (IQR) and the Z-score. The IQR method flagged values far outside the interquartile range (below Q1 − 1.5 × IQR or above Q3 + 1.5 × IQR), while the Z-score method flagged values more than three standard deviations from the mean. This two-layer approach removed spurious readings and other anomalies from the sensor data. PM2.5 readings above 500 µg/m3, which exceed plausible atmospheric limits, were treated as invalid. After this cleaning phase, the dataset retained only realistic pollution concentrations, which made the downstream simulation more reliable.
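The two-layer outlier screen can be sketched as follows. This is a minimal illustration using only the Python standard library, not the study's implementation. It assumes that a reading is dropped when either test flags it (the text does not say how the two tests were combined), and the function names are hypothetical.

```python
import statistics

PM25_PHYSICAL_MAX = 500.0  # µg/m3, the validity cut-off mentioned in the text


def zscore_flags(values, threshold=3.0):
    """Flag values more than `threshold` standard deviations from the mean."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [False] * len(values)
    return [abs(v - mean) / std > threshold for v in values]


def iqr_flags(values, k=1.5):
    """Flag values below Q1 - k*IQR or above Q3 + k*IQR."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v < low or v > high for v in values]


def clean_pm25(values):
    """Keep readings that pass both tests and the physical range check.

    Assumption: a reading flagged by EITHER test is removed.
    """
    z_flags = zscore_flags(values)
    q_flags = iqr_flags(values)
    return [
        v
        for v, z_bad, q_bad in zip(values, z_flags, q_flags)
        if not (z_bad or q_bad or v < 0 or v > PM25_PHYSICAL_MAX)
    ]


readings = [12, 15, 14, 13, 16, 15, 14, 13, 12, 15, 14, 900]
print(clean_pm25(readings))  # the 900 µg/m3 spike is dropped
```

Note that on short series the Z-score is bounded by the sample size, so the IQR test and the physical range check do most of the work for small batches.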

Environmental datasets can be interrupted by sensor outages, network problems, or transmission delays. To repair these gaps without compromising the time series, a two-tier imputation scheme was employed. Gaps of less than two hours were filled using linear interpolation. This technique preserved the temporal continuity of the data by estimating missing values from their immediate predecessors and successors. Longer interruptions required a more nuanced approach, for which we used K-nearest neighbours (KNN) imputation. To fill these gaps, we selected the most similar data points in terms of location (from geographically close sensors) and time (from similar historical data). By reducing bias and avoiding oversmoothing of meaningful variance, the hybrid interpolation-KNN method balanced simplicity with contextual awareness.
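A minimal sketch of the two-tier scheme is given below. It assumes 15-min sampling, so a gap shorter than two hours is at most seven consecutive missing samples. The KNN tier is simplified to averaging the k geographically nearest sensors at the same time step; the historical-similarity component described above is omitted. All function names and the data layout are hypothetical.

```python
def find_gaps(series):
    """Return (start, end) index pairs, end exclusive, for runs of None."""
    gaps, start = [], None
    for i, value in enumerate(series):
        if value is None and start is None:
            start = i
        elif value is not None and start is not None:
            gaps.append((start, i))
            start = None
    if start is not None:
        gaps.append((start, len(series)))
    return gaps


def interpolate(series, start, end):
    """Fill series[start:end] linearly from the valid neighbours on each side."""
    left, right = series[start - 1], series[end]
    span = end - start + 1
    for j in range(start, end):
        series[j] = left + (right - left) * (j - start + 1) / span


def knn_fill(series, start, end, neighbours, k=2):
    """Fill from the k nearest sensors that have a reading at the same step.

    neighbours: list of (distance_km, neighbour_series) tuples.
    """
    ranked = sorted(neighbours, key=lambda item: item[0])
    for j in range(start, end):
        candidates = [s[j] for _, s in ranked if s[j] is not None][:k]
        if candidates:
            series[j] = sum(candidates) / len(candidates)


def impute(series, neighbours, max_short_gap=7, k=2):
    """Short interior gaps: linear interpolation. Everything else: KNN."""
    filled = list(series)
    for start, end in find_gaps(series):
        interior = start > 0 and end < len(series)
        if end - start <= max_short_gap and interior:
            interpolate(filled, start, end)
        else:
            knn_fill(filled, start, end, neighbours, k)
    return filled
```

For example, a series `[10.0, None, None, 16.0, ...]` has its two-sample gap filled with 12.0 and 14.0, while an eight-sample gap falls through to the neighbour-based tier.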

Following data cleaning and gap filling, feature engineering was used to derive additional information from the datasets. The engineered features gave the models a larger input space and enabled more sophisticated predictions. The raw pollutant measurements were classified into Air Quality Index (AQI) levels in accordance with the standards set by the Central Pollution Control Board. The resulting categories were consistent with public health recommendations and easy to interpret.
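The mapping from a raw concentration to an AQI level can be illustrated with the usual piecewise-linear sub-index formula. The sketch below covers PM2.5 only. The breakpoints are the commonly published values of the Indian national AQI and are an assumption here; they should be verified against the current Central Pollution Control Board table before use, in particular the upper bound of the last band.

```python
# (conc_low, conc_high, index_low, index_high, category)
# Assumed 24-hour PM2.5 breakpoints in µg/m3; verify against the CPCB table.
PM25_BREAKPOINTS = [
    (0, 30, 0, 50, "Good"),
    (30, 60, 50, 100, "Satisfactory"),
    (60, 90, 100, 200, "Moderately polluted"),
    (90, 120, 200, 300, "Poor"),
    (120, 250, 300, 400, "Very poor"),
    (250, 380, 400, 500, "Severe"),
]


def pm25_sub_index(conc):
    """Return (sub-index, category) by linear interpolation within a band."""
    if conc < 0:
        raise ValueError("concentration must be non-negative")
    for c_lo, c_hi, i_lo, i_hi, category in PM25_BREAKPOINTS:
        if conc <= c_hi:
            index = i_lo + (i_hi - i_lo) * (conc - c_lo) / (c_hi - c_lo)
            return round(index), category
    return 500, "Severe"  # values beyond the last band are capped


print(pm25_sub_index(45))  # (75, 'Satisfactory')
```

An overall AQI is typically taken as the maximum sub-index across pollutants, which would require one such table per pollutant.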

A key step was the development of pollutant dispersion indices that accounted for wind. By combining pollution observations with data on wind speed and direction, the models could better estimate how airborne contaminants move through space. These indices were supplemented with temporal features such as day of the week, season, and time of day. These features capture recurring pollution patterns, such as peak hours and seasonal particulate levels.
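The text does not give the formula for the wind-adjusted dispersion index, so the sketch below uses a hypothetical one: the source concentration is scaled by how well the wind's travel direction aligns with the bearing from source to receptor, and diluted by wind speed. The temporal features use a cyclical encoding of the hour so that 23:00 and 00:00 sit close together; the season mapping assumes northern-hemisphere meteorological seasons. All names are illustrative.

```python
import math
from datetime import datetime

# Assumption: northern-hemisphere meteorological seasons (Dec-Feb = winter).
SEASONS = ["winter", "spring", "summer", "autumn"]


def temporal_features(ts):
    """Time-of-day (cyclical), day-of-week, and season features."""
    angle = 2 * math.pi * (ts.hour + ts.minute / 60) / 24
    return {
        "hour_sin": math.sin(angle),
        "hour_cos": math.cos(angle),
        "day_of_week": ts.weekday(),  # Monday = 0
        "is_weekend": ts.weekday() >= 5,
        "season": SEASONS[(ts.month % 12) // 3],
    }


def wind_adjusted_index(concentration, wind_speed, wind_from_deg, bearing_deg):
    """Hypothetical index: downwind alignment times concentration, diluted by speed.

    wind_from_deg: meteorological convention, the direction the wind blows FROM.
    bearing_deg:   compass bearing from the emission source to the receptor.
    """
    travel_deg = (wind_from_deg + 180) % 360
    alignment = max(0.0, math.cos(math.radians(travel_deg - bearing_deg)))
    return concentration * alignment / (1.0 + wind_speed)


print(temporal_features(datetime(2024, 1, 6, 6, 0)))
print(wind_adjusted_index(80.0, 3.0, wind_from_deg=270.0, bearing_deg=90.0))
```

A receptor directly downwind receives the full weighted contribution, while one upwind receives zero.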

Demographic data put the human impact of pollution into context. The overlays included the percentages of elderly people and children, as well as population density. These variables helped adjust the risk maps and assess vulnerability in line with public health goals. The preprocessing procedures yielded a clean and organised dataset suited to predictive modelling. Through careful removal of inconsistencies, missing value imputation, and feature engineering, the machine learning-driven air quality framework developed in this work achieved reliability, interpretability, and robustness.

Machine learning framework

The study’s prediction architecture uses a carefully selected set of machine learning algorithms to process real-time air quality data and estimate the associated health impacts. Ensemble models enhance the transparency, adaptability, and accuracy of risk assessments and pollutant concentration estimates. We began with the Random Forest (RF) method because of its success with high-dimensional, non-linear environmental data. Constructing many decision trees and averaging their outputs reduces overfitting and provides consistent projections across pollution scenarios. RF is well suited to straightforward regression tasks, such as estimating average pollution levels (PM2.5, NO2, etc.) across several locations. To capture fluctuations in air quality, we employed Gradient Boosting Regression Trees (GBRT). Each tree in a GBRT model corrects the residual errors of the trees before it. This sequential learning approach works well for tracking pollutant levels and increases sensitivity to hourly or daily changes in air quality.
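The residual-correcting idea behind GBRT can be shown with a deliberately small sketch: depth-one trees (stumps) on a single feature, fitted to the residuals of the current ensemble under squared-error loss. This is an illustration of the technique only; the study's models, features, and hyperparameters are not specified at this level of detail, and a production system would use an established library.

```python
def fit_stump(x, residuals):
    """Find the single-threshold split on x that minimises squared error.

    Requires at least two distinct values in x.
    """
    best = None
    for threshold in sorted(set(x))[:-1]:
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = sum((r - left_mean) ** 2 for r in left)
        sse += sum((r - right_mean) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    return best[1:]  # (threshold, left_mean, right_mean)


def stump_predict(stump, xi):
    threshold, left_mean, right_mean = stump
    return left_mean if xi <= threshold else right_mean


def fit_gbrt(x, y, n_trees=50, learning_rate=0.1):
    """Each new stump is fitted to the residuals left by the previous ones."""
    base = sum(y) / len(y)
    predictions = [base] * len(y)
    stumps = []
    for _ in range(n_trees):
        residuals = [yi - pi for yi, pi in zip(y, predictions)]
        stump = fit_stump(x, residuals)
        stumps.append(stump)
        predictions = [
            p + learning_rate * stump_predict(stump, xi)
            for p, xi in zip(predictions, x)
        ]
    return base, stumps, learning_rate


def predict(model, xi):
    base, stumps, learning_rate = model
    return base + learning_rate * sum(stump_predict(s, xi) for s in stumps)


# Toy data: concentration jumps from 10 to 50 after hour index 4.
hours = list(range(10))
conc = [10.0] * 5 + [50.0] * 5
model = fit_gbrt(hours, conc)
print(round(predict(model, 2), 1), round(predict(model, 7), 1))
```

With a learning rate of 0.1, each round removes about a tenth of the remaining error, which is why many small trees are combined rather than a few large ones.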

The system learns from time-series data using long short-term memory (LSTM) networks, which can retain long-range dependencies and sequential input patterns. Because air pollution follows cycles driven by seasonal changes, temperature variations, and cumulative emission impacts, this capability is necessary for environmental modelling. The LSTM models handled pollution surges and daily and weekly patterns well. To classify AQI in real time, XGBoost was integrated into the model ensemble. XGBoost is an optimised gradient boosting implementation that supports parallel processing and built-in regularisation, and it also handles missing values. The algorithm produces accurate and rapid predictions, which benefits real-time outputs such as pollution threshold alerts. Its real-time response and scalability across massive datasets make it well suited to smart city infrastructure.

SHapley Additive exPlanations (SHAP), a model interpretation method based on cooperative game theory, complements this design.

SHAP breaks each prediction down by input feature, showing how factors like wind speed, temperature, and time of day influence the predicted air quality index (AQI) or pollutant concentration. This transparency is crucial when working with stakeholders who need to understand both the predictions and their drivers, such as public health officials, city planners, and the general public. We trained all of the framework models using a large historical dataset that included pollution records, weather data, and demographic overlays. We optimised performance through validation and tuning, and we integrated live data streams for real-time operation. To adapt to new environmental patterns and updated sensor infrastructure, the design allows for frequent retraining.
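To make the attribution idea concrete, the sketch below computes exact Shapley values by enumerating every feature coalition. This brute-force approach is only feasible for a handful of features; SHAP implementations rely on faster model-specific or sampling-based estimators. The toy AQI model, its coefficients, and the baseline values are hypothetical and chosen only so the result is easy to check by hand.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution of predict(instance) - predict(baseline).

    Features absent from a coalition are set to their baseline value.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        x = {k: instance[k] if k in coalition else baseline[k] for k in names}
        return predict(x)

    attributions = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                total += weight * (value(coalition | {name}) - value(coalition))
        attributions[name] = total
    return attributions


def toy_aqi_model(x):
    """Hypothetical linear AQI model, for illustration only."""
    return 100 - 8 * x["wind_speed"] + 1.5 * x["temperature"] + 30 * x["rush_hour"]


instance = {"wind_speed": 1.0, "temperature": 30.0, "rush_hour": 1}
baseline = {"wind_speed": 4.0, "temperature": 20.0, "rush_hour": 0}
print(shapley_values(toy_aqi_model, instance, baseline))
```

The attributions always sum to the difference between the prediction for the instance and the prediction for the baseline, which is the property that makes per-feature explanations add up to the model output.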

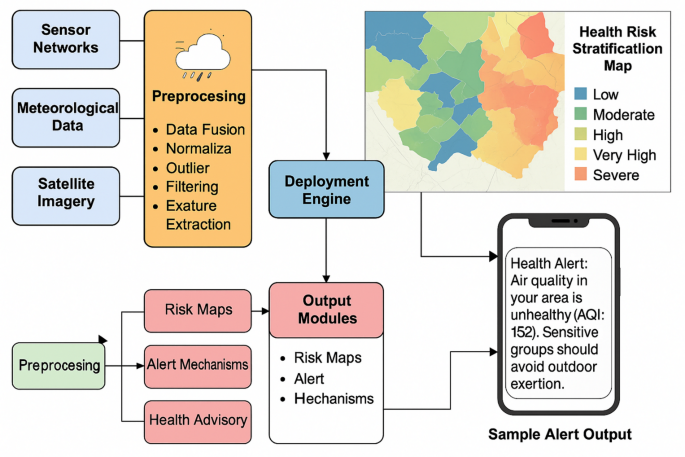

Architecture of the machine learning-driven framework.

The architectural design of the system and the data flow from collection to public warning broadcast are shown in Fig. 1, which reveals the layers of the structure and their interconnections. The Data Acquisition Layer gathers information from various sources: satellite imagery for aerosol presence and air clarity, meteorological data sources for current weather updates, and sensor networks at fixed locations around the study area. Combining these datasets produces a comprehensive input profile. All incoming data is then prepared for analysis in the Preprocessing Block. This block performs data fusion across formats and sources, scale normalisation, outlier filtering, and feature extraction to construct pollution ratios, hourly markers, and wind-adjusted dispersion indices, among other pertinent variables. These steps ensure that the models receive clean, organised data regardless of its source.

The core of the system, the Deployment Engine, houses the trained ensemble models. This engine produces the air quality index (AQI), health risk scores, and pollution forecasts in real time. Its outputs feed the Output Modules, which consist of risk maps, alert systems, and health advisories. The risk maps show exposure and pollution risks by region. These dynamic maps use zones that are colour-coded from low to extreme risk. In vulnerable locations with high concentrations of elderly people, alert systems are triggered when predicted AQI levels exceed safe limits. Based on the area’s demographics and pollution levels, health advisories recommend staying indoors or limiting outdoor activities.

Figure 1 also shows how these outputs are delivered to end-users. One path leads to a Health Risk Stratification Map, which displays coloured geographic zones labelled as Low, Moderate, High, Very High, or Severe. This map provides authorities with a clear picture of spatial risk distribution. Another output is directed to mobile platforms in the form of real-time notifications. A sample message reads: “Health Alert: Air quality in your area is unhealthy (AQI: 152). Sensitive groups should avoid outdoor exertion.” This highlights the user-facing aspect of the system, ensuring timely and personalised communication. The architecture depicted in Fig. 1 demonstrates how raw environmental data is transformed through a pipeline of preprocessing, model-based prediction, and intelligent dissemination. The modularity of the system allows it to be scaled across cities and adapted for varying environmental conditions. Its ability to provide real-time, interpretable, and health-relevant insights makes it a valuable tool for advancing environmental resilience and public health preparedness.

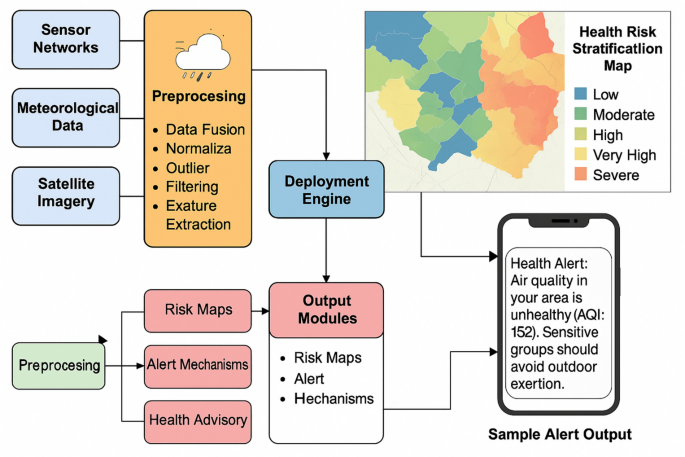

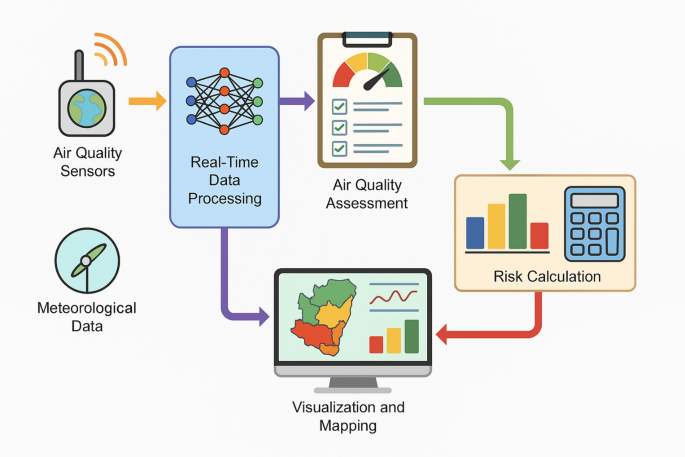

Figure 2 illustrates the end-to-end flow of how real-time environmental data is transformed into actionable health risk insights. The process begins with the continuous input of data from sensor networks, meteorological databases, and satellite imagery. These raw data streams are fed into a preprocessing module, where essential tasks such as data fusion, normalization, outlier detection, and feature extraction are performed to ensure consistency and reliability. The cleaned and enriched data is then passed into the deployment engine, which houses the trained machine learning models responsible for generating pollution forecasts and health risk scores. Personalised alerts and dynamic risk maps are two of the many output modules that get these signals. The findings are presented through graphical risk stratification maps and health alerts sent to mobile devices. Air quality responses and proactive public health management are made possible by the framework’s fast and accurate data processing.

Flow of real-time data and risk calculation.

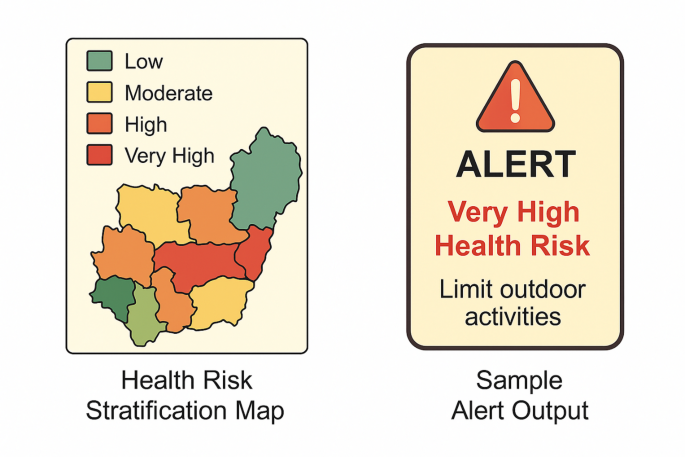

Health risk stratification map and sample alert output.

Figure 3 presents a dual-pane visualization that captures the final outputs of the predictive air quality and health risk assessment framework. On the left side of the figure, a colour-coded health risk stratification map illustrates the spatial distribution of air pollution-related health threats across different geographic zones. Each zone is categorised as Low, Moderate, High, Very High, or Severe according to a composite health risk assessment that takes into account pollutant concentration, exposure duration, and population vulnerability. This visual tool helps decision-makers identify critical areas. The right side of the figure shows the alert output on a mobile device. The alert message clearly states the air quality index (AQI) and provides health advice, such as staying indoors. This real-time alert system provides timely warnings and preventative guidance, helping sensitive groups make informed decisions that prioritise health.

Model training and hyperparameter tuning

A rigorous training and tuning approach was used to support the reliability and generalisability of the machine learning models. After preprocessing and feature engineering, we randomly split the dataset into three parts: 70% for model training, 15% for validation and tuning, and 15% for testing and evaluation. The validation set guided hyperparameter adjustments so that the test results remained unbiased, allowing for fair model development. Hyperparameters were fine-tuned using ten-fold cross-validation. This technique divides the training set into ten equal portions, then iteratively trains the model on nine portions and evaluates it on the remaining one. Averaging performance across folds gave a robust estimate that helped guard against overfitting. Because LSTM networks are sensitive to sequence length, an input window of 12 time steps was used, so each LSTM model forecast pollution from the preceding twelve data points. Dropout layers were added to the deep learning models to mitigate overfitting. This regularisation method improves generalisability by randomly deactivating neurons during training.
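The data handling described above can be sketched with three small helpers: a random 70/15/15 split, a ten-fold partition of the training indices, and a sliding window that turns a series into 12-step inputs with a one-step-ahead target. This is a standard-library illustration with hypothetical function names; the one-step forecast horizon is an assumption, since the text does not state it.

```python
import random


def split_70_15_15(n, seed=0):
    """Randomly split indices 0..n-1 into train, validation, and test sets."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    n_train = n * 70 // 100
    n_val = n * 15 // 100
    return (
        indices[:n_train],
        indices[n_train:n_train + n_val],
        indices[n_train + n_val:],
    )


def k_fold(indices, k=10):
    """Yield (train, held_out) index lists for each of the k folds."""
    folds = [indices[i::k] for i in range(k)]
    for i, held_out in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, held_out


def make_windows(series, window=12):
    """Build (inputs, targets): each input is `window` steps, target is the next."""
    inputs = [series[i:i + window] for i in range(len(series) - window)]
    targets = [series[i + window] for i in range(len(series) - window)]
    return inputs, targets


train, val, test = split_70_15_15(1000)
print(len(train), len(val), len(test))  # 700 150 150
X, y = make_windows(list(range(20)))
print(len(X), X[0], y[0])
```

For sequence models, windows would normally be built from contiguous readings before any shuffling, so that each 12-step input preserves temporal order.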

Environmental risk mapping

This study set out to forecast pollution levels and their impact on human health. Multi-layered risk mapping transformed environmental data into spatially resolved public health insights. This was made possible by enhanced geographic information system (GIS) visualisation and a composite Health Risk Index (HRI). Together, these techniques enabled rapid identification and reporting of environmental health hazards.

Health Risk Index (HRI)

The HRI accounted for both air pollution levels and the health risks they pose in different regions. Three primary factors determined the HRI. First, the pollutant exceedance factor measured the extent to which each pollutant level exceeded the acceptable international and national limits. This component linked the air quality data to regulatory standards. Second, population exposure was computed from two factors: population density and proximity to the pollution source.

People living close to major sources of air pollution, such as cities or highways, were found to be more exposed. This component ensured that the index reflected both the pollution and the people affected by it. The third and equally important factor was the vulnerability weight, which encompassed demographic metrics such as age distribution and the prevalence of pre-existing conditions like cardiovascular disease, asthma, and chronic obstructive pulmonary disease (COPD). The risk index contribution was increased for populations with a higher proportion of elderly people, children, or people with pre-existing health conditions. After the calculation, the HRI values were normalised to a scale from 0 to 1. Scores close to 1 indicate critical high-risk areas that need immediate intervention, while scores of 0 suggest no health risk at all. This standardisation allowed comparison between zones and over time, which facilitated the rapid identification of environmental health concerns.
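The text names the three HRI components but not how they are combined, so the following sketch makes two explicit assumptions: the components are multiplied, and the 0 to 1 scaling is a min-max normalisation across zones. The limit values and zone figures are illustrative placeholders, not data from the study.

```python
# Illustrative 24-hour limits in µg/m3; substitute the applicable standards.
LIMITS = {"pm25": 15.0, "no2": 25.0}


def exceedance(concentrations):
    """Mean fractional exceedance over the limits (0 when within limits)."""
    ratios = [max(0.0, concentrations[p] / LIMITS[p] - 1.0) for p in LIMITS]
    return sum(ratios) / len(ratios)


def raw_hri(zone):
    """Assumed multiplicative form: exceedance x exposure x vulnerability."""
    return exceedance(zone["conc"]) * zone["exposure"] * zone["vulnerability"]


def normalise(scores):
    """Min-max scale the raw scores to the range 0..1."""
    low, high = min(scores.values()), max(scores.values())
    if high == low:
        return {name: 0.0 for name in scores}
    return {name: (s - low) / (high - low) for name, s in scores.items()}


# Hypothetical zones for illustration only.
zones = {
    "rural": {"conc": {"pm25": 10.0, "no2": 12.0}, "exposure": 0.2, "vulnerability": 1.0},
    "suburban": {"conc": {"pm25": 30.0, "no2": 25.0}, "exposure": 0.5, "vulnerability": 1.0},
    "industrial": {"conc": {"pm25": 90.0, "no2": 75.0}, "exposure": 0.8, "vulnerability": 1.2},
}
hri = normalise({name: raw_hri(zone) for name, zone in zones.items()})
print(hri)
```

Because min-max scaling is relative to the zones being compared, a score of 0 here means the lowest risk in the set; comparing across time, as the text describes, would need fixed reference bounds instead.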

Map-based display

GIS technologies were used extensively to make the spatial representations of the HRI model outputs and pollutant predictions easier to understand. High-resolution, multi-layered visualisations were created using ArcGIS and QGIS. These maps helped officials and citizens alike to grasp the situation. First, pollutant concentration heatmaps revealed how widespread and intense the pollutants were in the study area. These heatmaps displayed pollution levels on a scale from green, indicating acceptable air, to red, indicating harmful concentrations. Second, health risk zone maps were derived from the HRI. Using data on exposure, demography, and the environment, these maps categorise areas according to the danger they pose to human health. Like the heatmaps, they used a gradient colour scheme to show the urgency of intervention in each zone, with green denoting low risk and red denoting high risk. The maps also helped reveal environmental justice issues, such as disproportionate exposure of vulnerable communities to pollutants. Finally, temporal risk animations showed how air quality and health concerns evolved over time. These 24-hour sliding-window animations showed pollution trends such as morning traffic peaks and night-time industrial emissions. This time-sensitive visualisation helped local authorities anticipate high-risk periods and allocate emergency response resources.

These GIS tools can turn complex environmental facts into geographical insights. Technical models and practical visualisation helped decision-makers adopt targeted public health alerts, short-term mitigating actions, and long-term environmental planning.

Integration with demographic and traffic data

To make the air quality forecasting system more realistic and reliable, we incorporated demographic and real-time traffic data. In order to pinpoint populations at high risk and get accurate emission estimates, this integration was crucial. In urban and industrial settings with uneven exposure hazards, the system became more sensitive to real-world scenarios by integrating environmental indicators with human and activity-based variables.

Demographic overlays

To determine population vulnerability, block-level demographic records from the census were overlaid onto the pollutant concentration grids. Using this spatial overlay, the system detected pollution levels and evaluated their expected effects on specific groups. Particular attention was paid to the percentage of elderly people, children under the age of 12, and people with asthma or cardiovascular disease. These demographics entered the health risk index after vulnerability weighting. As a result, the model can prioritise areas with both high pollutant concentrations and susceptible populations when issuing public health alerts and emergency plans.

Industrial and traffic-related emissions

Industrial emission records, demographic data, and real-time traffic flow data were all used as predictive factors in the model. Traffic volume, average speed, and flow intensity were provided in real time by Google Traffic APIs. This data pinpointed the roads and urban corridors where vehicle emissions are highest. Stationary emission sources, such as power plants, refineries, and factories, were mapped using data from municipal pollution control boards or environmental compliance records. Emission weights were assigned to these geotagged sources according to pollutant type and volume. By incorporating both stationary and mobile emission predictors, the model enhanced spatial granularity and accounted for temporal changes in pollution patterns.

Adding data on emission activities and demographic overlays strengthened the framework’s contextual intelligence. It improved the model’s ability to forecast the location of pollution and identify the most susceptible populations, allowing for more equitable and effective management of environmental health risk.

Pilot results: pollution distribution

Historical air quality records from regional monitoring organisations were used to build statistical distributions from which synthetic data (n = 100) were generated. Before live deployment, the synthetic dataset was used to simulate pollution concentrations and validate the framework’s geographical and temporal behaviour. The accompanying bar chart compares the average concentrations of six major air pollutants (PM2.5, PM10, NO2, SO2, CO, and O3) across five distinct environmental zones: Urban Core, Industrial Area, Suburban, Rural, and Traffic Corridor. The data highlight significant spatial variation in pollutant levels, with the Industrial Area and Traffic Corridor showing the highest concentrations across most categories. For instance, PM10 and CO are notably elevated in traffic-dense regions, whereas PM2.5 and NO2 concentrations peak in industrial zones. Table 1 provides the corresponding numerical values that support the visual trends shown in the figure. These results indicate that urbanization and vehicular density are major contributors to poor air quality. The data also support the need for spatially sensitive modeling, as the health risks associated with pollution exposure vary widely by location. This visualization supports targeted interventions and prioritization in air quality management efforts.

Deployment and real-time updating

Apache Kafka was used to stream environmental data continuously and to ingest data reliably from a large number of sensor nodes. Google Cloud Platform (GCP) securely stored and processed these data streams and made them available for machine learning inference. In this cloud environment, the trained ensemble models evaluated air quality trends rapidly. An interactive dashboard was built with Flask for the backend and React for the UI. The dashboard shows real-time visualisations, risk maps, and automated public notifications. With data processing and forecast updates occurring every five minutes, the system could react swiftly to changes in the environment. This deployment strategy generated scalable, actionable air quality information with low latency.
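The five-minute update cycle implies that incoming readings are grouped into fixed windows before each forecast refresh. The sketch below shows one way to do that grouping in plain Python, independent of any streaming library; the record layout `(epoch_seconds, sensor_id, value)` and the function name are assumptions, and in the deployed system the records would arrive from the message stream rather than a list.

```python
from collections import defaultdict

WINDOW_SECONDS = 300  # five-minute update cycle described in the text


def bucket_readings(readings):
    """Average readings per sensor within each five-minute window.

    readings: iterable of (epoch_seconds, sensor_id, value) tuples.
    Returns {window_start_epoch: {sensor_id: mean_value}}.
    """
    sums = defaultdict(lambda: defaultdict(lambda: [0.0, 0]))
    for timestamp, sensor_id, value in readings:
        window_start = int(timestamp) - int(timestamp) % WINDOW_SECONDS
        accumulator = sums[window_start][sensor_id]
        accumulator[0] += value
        accumulator[1] += 1
    return {
        start: {sensor: total / count for sensor, (total, count) in sensors.items()}
        for start, sensors in sums.items()
    }


sample = [(0, "a", 10.0), (120, "a", 20.0), (299, "b", 5.0), (300, "a", 40.0)]
print(bucket_readings(sample))
```

Each completed window would then be passed through preprocessing and the deployed models to refresh the forecasts and risk maps.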

This comprehensive approach yields a system for real-time air quality forecasting and health risk mapping that is dynamic, interpretable, and scalable. Researchers, public health organisations, and municipalities can all benefit from the framework’s decision-support features, which combine health informatics with spatial analysis and machine learning. As a next step, health impact data and real-time sensor deployments will be used to test the model under real-world conditions.
